The Case of the Diverging Loss: When ‘Best Loss’ != ‘Best Model’

Our “best loss” checkpoint was not our best ASR model. This run made it clear that for ASR, WER and human review should drive model selection, not loss alone.

Key Takeaways

- Loss curves can look unstable while WER still improves.

- Epoch-boundary loss drops are not automatically a failure signal.

- Checkpoint selection should be tied to task metrics, then human verification.

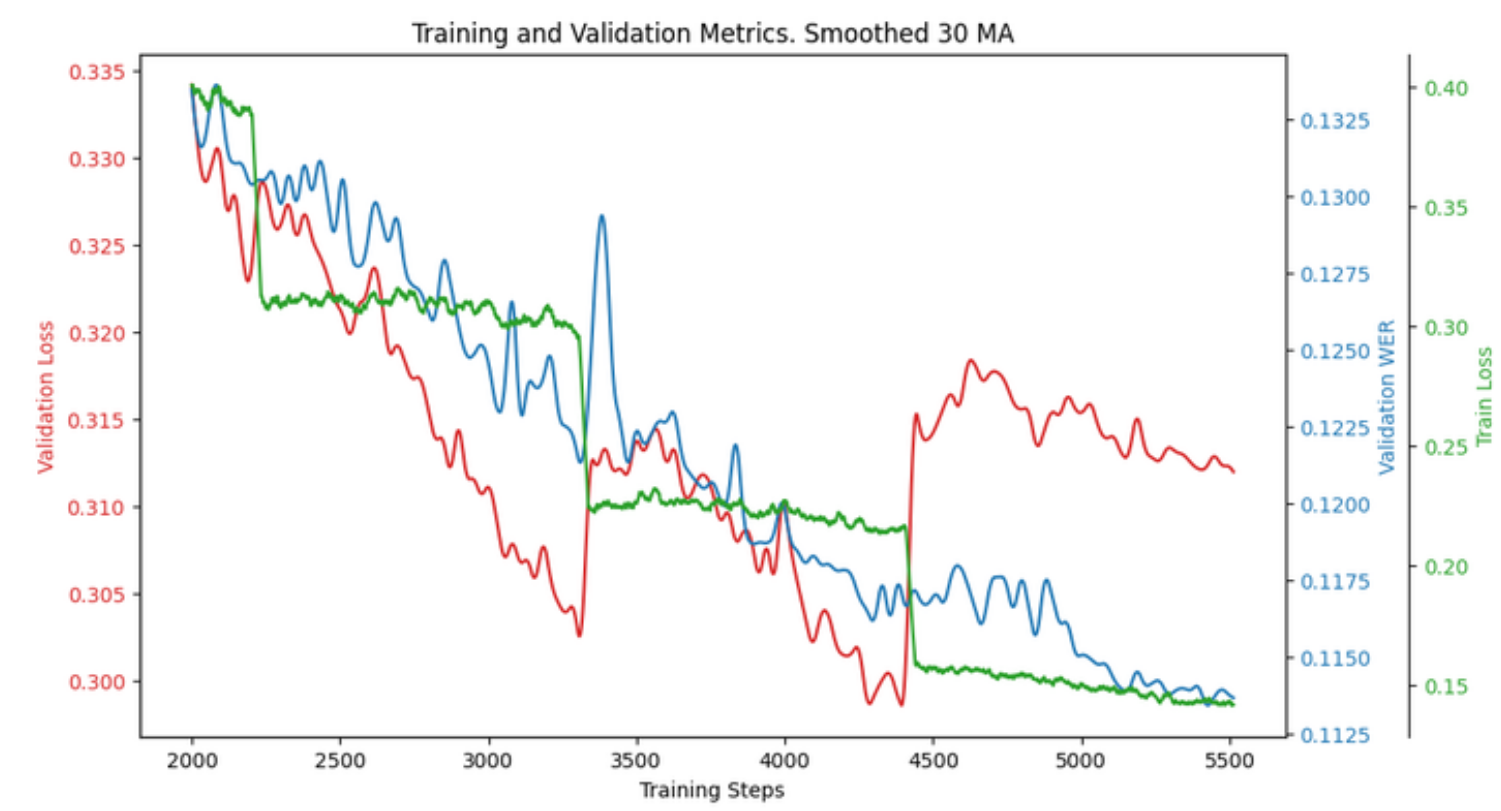

While fine-tuning Whisper, we observed a peculiar loss behavior that challenges the standard intuition of deep learning: the model’s training loss and its actual performance metric (WER) started to drift apart.

Training loss and validation WER diverging across epochs.

The “Epoch Collapse” Phenomenon

Looking at the chart above, you can see sharp, vertical drops in the training loss (green). We found that these coincide exactly with the start of a new epoch. As the training loop returns to the first batch, the loss “collapses” momentarily.

While visually striking, this isn’t necessarily a sign of failure. In fact, as the loss jumps and fluctuates, the Validation WER (the metric that actually matters for transcription quality) continues its steady decline.
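To check whether sharp drops really line up with epoch boundaries, a quick script over the logged per-step losses is enough. The sketch below is a minimal illustration, not our training code: the loss values, `steps_per_epoch`, and the 30% threshold are all made-up assumptions, and the helper names are hypothetical.

```python
def sharp_drops(losses, rel_drop=0.3):
    """Return step indices where the loss fell by more than `rel_drop`
    (as a fraction of the previous step's loss)."""
    drops = []
    for i in range(1, len(losses)):
        prev = losses[i - 1]
        if prev > 0 and (prev - losses[i]) / prev > rel_drop:
            drops.append(i)
    return drops


def at_epoch_start(step, steps_per_epoch):
    """True if `step` is the first step of an epoch (steps are 0-indexed)."""
    return step % steps_per_epoch == 0


# Illustrative numbers only: 3 epochs of 4 steps each.
losses = [2.0, 1.9, 1.8, 1.7, 1.0, 1.05, 1.0, 0.98, 0.5, 0.55, 0.52, 0.5]
steps_per_epoch = 4

drops = sharp_drops(losses)
aligned = [at_epoch_start(s, steps_per_epoch) for s in drops]
print(drops, aligned)
```

If every detected drop sits on an epoch boundary, the pattern is more likely an artifact of revisiting the data than a sign that training is breaking down.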

The Practical Lesson: Don’t Blindly Trust the Loss

The most important takeaway from this run was that the checkpoint with the lowest validation loss was not the one with the lowest validation WER.
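In code, the difference comes down to which key you minimize over. The sketch below assumes each checkpoint has been evaluated for both validation loss and validation WER. The `Checkpoint` class and all numbers are hypothetical and chosen only to show the two criteria disagreeing; they are not the values from our run.

```python
from dataclasses import dataclass


@dataclass
class Checkpoint:
    step: int
    val_loss: float
    val_wer: float


# Made-up evaluation results for three checkpoints.
checkpoints = [
    Checkpoint(step=1000, val_loss=0.42, val_wer=0.31),
    Checkpoint(step=2000, val_loss=0.38, val_wer=0.27),
    Checkpoint(step=3000, val_loss=0.45, val_wer=0.24),
]

best_by_loss = min(checkpoints, key=lambda c: c.val_loss)
best_by_wer = min(checkpoints, key=lambda c: c.val_wer)

print("best by loss:", best_by_loss.step)
print("best by WER: ", best_by_wer.step)
```

With these numbers the two criteria pick different checkpoints, which is the situation we ran into. The WER-selected candidate then goes to human review rather than straight to deployment.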

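One way this can happen in ASR: cross-entropy measures how much probability the model assigns to each reference token, so a single confident mistake is penalized heavily. WER only counts whether the decoded words match the reference. A checkpoint can therefore look worse on cross-entropy, for example by becoming overconfident on a few tokens, while its decoded transcripts keep improving. If we had simply picked the checkpoint with the “best loss,” we would have shipped a suboptimal transcriber.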
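A toy calculation shows how loss and accuracy can move in opposite directions. It assumes a two-token vocabulary, so a token is decoded correctly whenever the model gives the reference token a probability above 0.5. The probabilities are invented for illustration and do not come from our model.

```python
import math


def cross_entropy(p_correct):
    """Mean negative log-probability assigned to the reference tokens."""
    return -sum(math.log(p) for p in p_correct) / len(p_correct)


def accuracy(p_correct):
    """Fraction of tokens decoded correctly (binary vocabulary: p > 0.5 wins)."""
    return sum(p > 0.5 for p in p_correct) / len(p_correct)


# Probability each checkpoint assigns to the reference token, per position.
early = [0.60, 0.60, 0.45, 0.45]  # cautious: two narrow misses
late = [0.90, 0.90, 0.90, 0.01]   # confident: one very confident miss

ce_early, acc_early = cross_entropy(early), accuracy(early)
ce_late, acc_late = cross_entropy(late), accuracy(late)

print(f"early: loss={ce_early:.3f} acc={acc_early:.2f}")
print(f"late:  loss={ce_late:.3f} acc={acc_late:.2f}")
```

The later checkpoint gets more tokens right, yet its loss is higher because the one confident miss dominates the average. An error-count metric like WER sees only the decoded output, so it would rank the two checkpoints the other way round.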

Final Thoughts

This divergence highlights why automated metrics are only a compass, not a destination.

While WER gives us a better signal than raw loss, even it has blind spots, especially with Swiss German dialects, where spelling isn’t standardized and a perfectly acceptable transcription can be counted as an error simply because it spells a word differently from the reference. By the end of our research, we concluded that human-in-the-loop evaluation remains essential. Metrics helped us navigate the training process, but final judgment was always reserved for human ears.
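The spelling blind spot is easy to reproduce with a plain word-level WER. The implementation below is a minimal sketch using edit distance over whitespace-separated words, not the evaluation code from our pipeline. The two sentences are illustrative spelling variants we made up for this example, not samples from our data.

```python
def wer(reference: str, hypothesis: str) -> float:
    """Word error rate: word-level edit distance divided by reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] = edit distance between the ref words seen so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


# Same utterance, two plausible spellings (illustrative only).
reference = "ich ha das nöd gseh"
hypothesis = "ich han das nid gseh"

score = wer(reference, hypothesis)
print(f"WER = {score:.2f}")
```

A listener would likely accept both lines as the same sentence, but the metric charges two substitutions out of five words. This is the kind of case where a human reviewer has to make the call.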